Chatbots.org Business News

BRAIN: A Platform That Enables Businesses To Create Chatbots And More

| by Barend Jungerius on 5 years, 12 months ago in Business, Tools & Products, Business News |

Summary: BRAIN is basically a chatbot management system used by businesses to create, implement and update chatbots

For this series of blog posts, I interview professionals working in the exciting world of chatbots. This week I talked with Alex Galert, founder and CEO of BRAIN: a platform that enables businesses to create chatbots.

BRAIN is basically a chatbot management system: customers can create, implement and update chatbots for their own business or their clients’ businesses via the BRAIN website or the iOS & Android app. But there is more! It’s also a marketplace builder and a webshop builder, and it can be used to implement processes based on Blockchain.

Read more about: BRAIN: A Platform That Enables Businesses To Create Chatbots And More

An International Chatbots.org Survey: Consumers say No to Chatbot Silos

| by Erwin van Lun on 6 years, 2 months ago in Chatbots.org news, Chatbots.org News |

Summary: Chatbots.org reports that consumers in the US and UK say NO to chatbot silos. There is a strong need for integration!

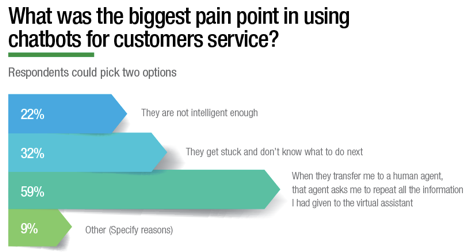

Sponsored by virtual agent vendor eGain, we are proud to present the results of our survey of 3000 consumers. It reveals that, according to our panel, lack of integration with human-assisted service is the biggest pain point in using virtual assistants. Download the infographics as a PNG report or as a PDF report.

Read more about: An International Chatbots.org Survey: Consumers say No to Chatbot Silos

Chatbot which recognizes objects

| by Erwin van Lun on 6 years, 5 months ago in Agent's perception of humans, Gesture recognition, Speech recognition, Agent's Expression, Speech synthesis (TTS), Agent's Capabilities, Object Recognition, Business News |

Summary: This #chatbot for iPhone recognizes objects, recognizes speech and starts on shake

Although #chatbots of widely varying quality are popping up everywhere at the moment, I personally love this example. Smarty is a chatbot that appears as an avatar. I like it partly for its simplicity, but especially for its potential. It recognizes objects, speech and gestures, all on your iPhone.

Come and join me at UTTR in London Oct 3rd!

| by Erwin van Lun on 6 years, 7 months ago in speaking, Chatbots.org News |

Summary: On Oct 3rd, Erwin will be on stage at the AI & Chatbot Conference in London. Why not come along?

These are exciting times. Once I decided to get back in business, life became a rollercoaster. A meetup group with more than 320 members, speaking on Frank Watching next week, and now I’m invited to moderate a panel at the UTTR AI & Chatbot Conference in London on Oct 3rd. How cool is all that?

Below you’ll find the press release. In the meantime, I keep working on getting this website back on track and up to speed. Apologies for any hiccups you may encounter, but trust that they will be fixed!

Read more about: Come and join me at UTTR in London Oct 3rd!

Cool chatbot animation

| by Erwin van Lun on 6 years, 7 months ago in Agent's perception of humans, Eye tracking, Agent's Appearance, Business, Tools & Products, Business News |

Summary: Chatbots are here to stay! And the next step is animation on mobile phones!

Yes, chatbots on Facebook Messenger are popular these days. But even though the developments seem quite impressive, we’ve only just begun. As soon as they start to understand our emotions a bit, be prepared for full visual interaction. These animations provided by Expressive AI show you what is already feasible today!

KLM introduces flight reservations through WeChat

| by Erwin van Lun on 6 years, 8 months ago in Applications, Back End Integration and Knowledge bases, Business News |

Summary: Airline KLM introduces payment through WeChat

Dutch airline KLM introduces payments through WeChat in China. You simply purchase your ticket through a regular channel like web or mobile, and you choose ‘WeChat Pay’ to finish your booking (the social media platform WeChat, extremely popular in China, has a built-in booking system). Immediately after your payment, you receive flight information and booking documents through WeChat, and you can ask questions in simplified Chinese or English, 24/7. Wanna try? Scan the QR code below.

Read more about: KLM introduces flight reservations through WeChat

Chatbot meetup AMSTERDAM

| by Erwin van Lun on 6 years, 11 months ago in Chatbots.org news, Chatbots.org News |

Summary: On Wednesday 31st, we'll organize our very very very first Chatbot meetup. This time in AMSTERDAM

On Wednesday 31st, we’ll organize our very very very first Chatbot meetup. This time in AMSTERDAM. I’m very pleased to meet new people and see old faces. Remember the good old days AND look forward to a VERY exciting future!

ChatBottle Contest: this time it is getting serious!

| by Erwin van Lun on 6 years, 11 months ago in Chatbot Battles, Award News |

Summary: The new chatbot contest 'ChatBottle' is very promising. In their very first year more than 2500 people voted!

Between January 9 and January 18, 2017, more than 2500 people from 65 countries voted 5373 times for the best travel, productivity, social, e-commerce, entertainment, and news chatbots in ChatBottle, a brand new Chatbot Challenge.

The community selected the 7 best chatbots for Facebook Messenger, Slack, Kik, Telegram and Skype.

So, the 1st ChatBottle Award goes to…

Instalocate - the best Travel chatbot

Meekan - the best Productivity chatbot

Foxsy - the best Social chatbot

BFF Trump - the best Entertainment chatbot

chatShopper - the best E-commerce chatbot

TechCrunch - the best News chatbot

Swelly - Editors’ Choice chatbot

There were 35 chatbot nominees including the following.

Travel: Instalocate (298 votes), Kayak (256 votes), Hipmunk (183 votes), Skyscanner (137 votes), Austrian Airlines bot (130 votes), Mica, the Hipster Cat Bot (90 votes), Marina Alterra (50 votes)

Productivity: Meekan (355 votes), Pepper (274 votes), Zoom (132 votes), Statsbot (128 votes), Yala (119 votes), GrowthBot (52 votes)

Social: Foxsy (354 votes), Swelly (326 votes), Poncho (133 votes), Sensay (71 votes), Visabot (45 votes)

Entertainment: BFF Trump (265 votes), Icon8 (120 votes), Trivia Blast (103 votes), And Chill (94 votes), Streak Trivia (68 votes), COD Messenger (65 votes)

E-commerce: chatShopper (332 votes), H&M (173 votes), RemitRadar (74 votes), Burberry (73 votes), 1-800-Flowers chatbot (59 votes), Allset Bot (51 votes)

News: TechCrunch (331 votes), CNN (228 votes), Wall Street Journal (85 votes), theScore (64 votes), NewsBytes App (55 votes)

Chatbots.org wishes this new battle lots of success

Read more about: ChatBottle Contest: this time it is getting serious!

Facebook launches Bot platform for Messenger

| by Erwin van Lun on 8 years ago in Applications, User Client Technology, Business News |

Summary: Facebook now allows businesses to integrate automated, intelligent and emotional chatbots in Messenger

Facebook introduces bots for the Messenger Platform. These bots can provide anything from automated subscription content like weather and traffic updates, to customized communications like receipts, shipping notifications, and live automated messages, all by interacting directly with the people who want to get them.

This time, chatbots are becoming Big Business, almost at once: every month, over 900 million people around the world communicate with friends, families and over 50 million businesses on Messenger. It’s the second most popular app on iOS, and was the fastest growing app in the US in 2015. Now businesses can engage with their customers in a personalised, emotional way that is available 24/7.

Read more about: Facebook launches Bot platform for Messenger

Nadine is a very human-like robot

| by Erwin van Lun on 8 years, 2 months ago in Agent's Appearance, Humanoids, Research News |

Summary: Nadine, developed by scientists in Singapore, is a real virtual human, a human-like robot.

Scientists at the Nanyang Technological University, Singapore have unleashed their latest creation on the university’s campus: the world’s most human-like robot.

Nadine, as the robot is called, has soft skin and medium brunette hair just like her creator, Professor Nadia Thalmann. But more than her looks, the most impressive thing about Nadine is what she does: make eye contact, smile, meet and greet guests, shake hands, and even recognise past visitors and engage in conversation with them based on previous exchanges.

Unlike previous generations of robots, Nadine has an individual personality, with moods that change depending on the topic.